by Anton Bespalov and Anja Gilis

In the dialectics of the German philosopher Georg Hegel, quantitative changes transform into qualitative changes. This is often illustrated with the paradox of the heap: if you keep adding grains to the same spot, at some point you will have a heap, yet it seems impossible to say precisely how many grains that takes.

Most research institutions have written descriptions of specific work-related processes. Some have more, others have fewer. Does that mean that the more paperwork you create in the lab describing and regulating various aspects of research life, the higher the quality (Q)? The answer is “yes, perhaps”. Defining proper expectations is essential for quality and will support an improvement process, but if quality efforts are limited to writing guidelines and procedures, this improvement will rapidly level off.

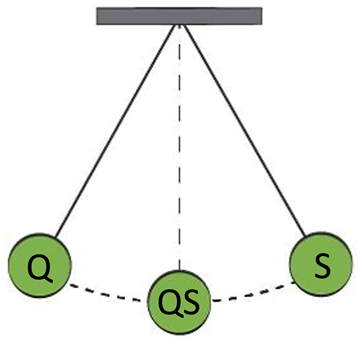

What is missing? Any attempt to introduce rules and standard processes should be accompanied by a feedback mechanism that confirms the good intentions are reaching their goal and, if something goes wrong, allows corrective measures to be applied.

This is exactly how a quality system emerges out of a collection of guidelines, protocols, policies and rules.

But the “control” pendulum can also go in the opposite direction. We would like to share a recent example:

A major CRO providing a wide range of in vitro biology services has a turnaround time of 14 days (the time between initiation of a study and availability of the study results to the customer). What happens if things get delayed and the results cannot be delivered within 14 days? The customer is informed via an online message that the study has been canceled. And literally a moment later, another notification arrives saying that the study is … back as “active”, and the 14 days start to count again. What is the reason? It turns out that the CRO has introduced key performance indicators (KPIs) such as the percentage of studies completed within the default turnaround time of 14 days. And it is likely that reward and recognition measures are connected to such KPIs. Whether the customer is happy or not, the system will be seen as performing well as long as the recorded (not necessarily actual) turnaround time is kept within the target.
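The gap between recorded and actual turnaround time can be made concrete with a few lines of Python. This is only an illustrative sketch: the dates, the `turnaround_days` helper and the assumption that a reactivation resets the recorded start date are all hypothetical and are not taken from the CRO's actual system.

```python
from datetime import date

TARGET_DAYS = 14  # default turnaround time from the example above


def turnaround_days(start: date, delivered: date) -> int:
    """Number of days between a start date and the delivery date."""
    return (delivered - start).days


# Hypothetical study: initiated on 1 March, results delivered on 20 March.
initiated = date(2024, 3, 1)
delivered = date(2024, 3, 20)

# Assumption: the "cancel and reactivate" step resets the recorded start date.
reactivated = date(2024, 3, 15)

actual = turnaround_days(initiated, delivered)      # what the customer experiences
recorded = turnaround_days(reactivated, delivered)  # what the KPI sees

print(f"actual:   {actual} days, within target: {actual <= TARGET_DAYS}")
print(f"recorded: {recorded} days, within target: {recorded <= TARGET_DAYS}")
```

With these made-up dates the customer waits 19 days, while the KPI registers a 5-day study that comfortably meets the target.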

No system can be perfect, and of course nothing dramatic happens if a customer gets results a few days late. This example serves only to warn that a quality system may sometimes become too much of a “system” (S) and start to ignore “quality” (Q). The pendulum has reached the other extreme.

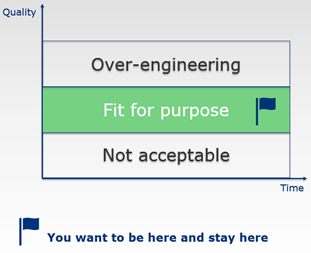

It is not enough to have a feedback mechanism that checks whether the processes and KPIs you put in place are being followed. It is equally important to re-ground from time to time: to ask what quality is all about in your specific situation and to evaluate whether the processes and procedures you have put in place are still the right ones.

Indeed, quality can be over-engineered too!

The EQIPD Quality System implements several mechanisms to detect a need to change or to improve. All these mechanisms rely on the process owner (or a dedicated person to whom this task is delegated) conducting spot checks of key processes. EQIPD does not set numeric targets for “quality” and does not expect a research unit to set them. In fact, especially in the first years of operation under the EQIPD Quality System, errors and deviations from recommended practices are of great value because they make it possible to test the efficiency of the improvement mechanisms and, if adequately recorded and reported, also strengthen the transparent quality culture in the team.
